I am introducing my most recent research on a log-normal stochastic volatility model with applications to assets with positive implied volatility skews, such as the VIX index, short index ETFs, cryptocurrencies, and some commodities.

Together with Parviz Rakhmonov, I have extended my earlier work on the Karasinski-Sepp log-normal volatility model into an extensive paper. In addition to deriving a closed-form solution for option valuation under this model, the paper puts an extra focus on modelling the implied volatilities of assets with positive return-volatility correlation.

Assets with positive implied volatility skews and return-volatility correlations

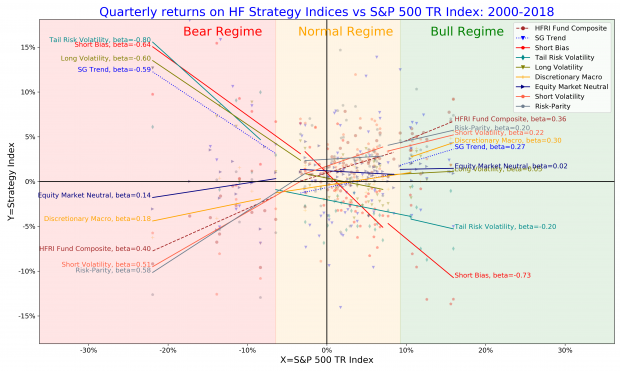

While it is typical to observe negative correlation between the returns of an asset and changes in its implied and realized volatilities, many assets in fact have positive return-volatility correlation and, as a consequence, positive implied volatility skews. Some representative examples are shown in the figure.

(A) The VIX index provides protection against corrections in the S&P 500 index. As a result, out-of-the-money calls on VIX futures are valuable and command higher risk premia than puts.

(B) Short and leveraged short ETFs on equity indices have positive implied volatility skews because they are anti-correlated with the underlying equity indices. As an example, I use the 3x short Nasdaq ETF with ticker SQQQ, which is the largest short ETF in the US equity market and has a very liquid listed options market.

(C) Cryptocurrencies, including Bitcoin and Ethereum, and "meme" stocks, such as AMC, have positive skews during speculative phases, when positive returns feed speculative demand for upside. These self-feeding price dynamics increase the demand for calls after a period of rising prices. However, the positive return-volatility correlation tends to reverse once the "greed" regime is over and a "risk-off" regime prevails.

(D) Gold and commodities in general may have positive volatility skews depending on supply-demand imbalances, seasonality, etc.
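Example (B) rests on a simple sign flip, which a toy simulation makes concrete. This is a minimal sketch under stated assumptions, not market data:

- The index's daily returns and volatility changes are hypothetical correlated Gaussians with correlation -0.7.
- The ETF is a daily-rebalanced -3x product with fees and financing ignored, so its daily return is exactly -3 times the index return and its volatility is 3 times the index volatility.
- The helper name `return_vol_correlation` is hypothetical.

```python
import numpy as np


def return_vol_correlation(returns, vol_changes):
    """Sample correlation between returns and changes in volatility."""
    return float(np.corrcoef(returns, vol_changes)[0, 1])


rng = np.random.default_rng(7)
n_days = 2_000
rho_index = -0.7  # hypothetical return-vol correlation of the underlying index

# Correlated Gaussian shocks: index returns and index volatility changes.
z1 = rng.standard_normal(n_days)
z2 = rng.standard_normal(n_days)
index_returns = 0.01 * z1
index_vol_changes = 0.02 * (rho_index * z1 + np.sqrt(1.0 - rho_index**2) * z2)

# Daily-rebalanced 3x short ETF (assumption: no fees, no financing costs).
leverage = -3.0
etf_returns = leverage * index_returns
etf_vol_changes = abs(leverage) * index_vol_changes  # ETF vol scales with |leverage|

corr_index = return_vol_correlation(index_returns, index_vol_changes)
corr_etf = return_vol_correlation(etf_returns, etf_vol_changes)
print(f"index return-vol correlation: {corr_index:+.3f}")
print(f"-3x ETF return-vol correlation: {corr_etf:+.3f}")
```

Because correlation is invariant to positive scaling and flips sign under negation, the ETF's return-volatility correlation is exactly the negative of the index's. A negatively skewed index therefore maps to a positively skewed short ETF.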

Importantly, the valuation of options on these assets is not feasible using conventional stochastic volatility models applied in practice, such as the Heston, SABR, and exponential Ornstein-Uhlenbeck stochastic volatility models. With positive return-volatility correlation, these models fail to be arbitrage-free: forwards and call prices are not martingales. Curiously enough, the topic of no-arbitrage for SV models with positive return-volatility correlation has not received attention in the literature, despite the large number of assets with positive return-volatility correlation.

Applications to Options on Cryptocurrencies

Another important application of our work is the pricing of options on cryptocurrencies, where call and put options with inverse pay-offs are dominant. The advantage of inverse pay-offs for cryptocurrency markets is that all option-related transactions can be settled in units of the underlying cryptocurrency, such as Bitcoin or Ethereum, without using fiat currencies. Both inverse options (traded on the Deribit exchange) and vanilla options (traded on CBOE) exist for cryptocurrencies. Critically, this means a stochastic volatility model must satisfy the martingale condition under both the money-market-account and the inverse measures to exclude arbitrage between vanilla and inverse options. We show that price dynamics in our model are martingales under both the inverse and the money-market-account measures.
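The link between the two measures can be checked numerically in a deliberately simplified setting. This sketch assumes constant volatility (Black-Scholes dynamics with zero interest rates), not the log-normal SV model of the paper. The function names and the inputs (a spot of 60,000, a strike of 65,000, 80% volatility, a one-month maturity) are hypothetical.

- **Vanilla call.** It pays (S_T - K)^+ in USD and is valued under the money-market-account measure Q.
- **Inverse call.** It pays (S_T - K)^+ / S_T in units of the cryptocurrency and is valued under the inverse measure Q^I, which uses S as numeraire.
- **Change of measure.** When S is a true martingale under Q, the inverse price in coin units equals the vanilla USD price divided by S_0. Both Monte Carlo estimates below should therefore match the same closed-form value.

```python
import math

import numpy as np


def norm_cdf(x):
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_usd(s0, k, sigma, t):
    """Black-Scholes call price in USD with zero rates (forward equals spot)."""
    d1 = (math.log(s0 / k) + 0.5 * sigma**2 * t) / (sigma * math.sqrt(t))
    d2 = d1 - sigma * math.sqrt(t)
    return s0 * norm_cdf(d1) - k * norm_cdf(d2)


def mc_vanilla_usd(s0, k, sigma, t, n=400_000, seed=1):
    """Vanilla call (S_T - K)^+ in USD, simulated under the money-market measure Q.

    Under Q the drift of log S is -0.5 * sigma^2 * t, so E[S_T] = S_0.
    """
    z = np.random.default_rng(seed).standard_normal(n)
    s_t = s0 * np.exp(-0.5 * sigma**2 * t + sigma * math.sqrt(t) * z)
    return float(np.mean(np.maximum(s_t - k, 0.0)))


def mc_inverse_coin(s0, k, sigma, t, n=400_000, seed=2):
    """Inverse call (S_T - K)^+ / S_T in coin units, simulated under Q^I.

    With S as numeraire the drift of log S flips to +0.5 * sigma^2 * t,
    so that E[1 / S_T] = 1 / S_0 under Q^I.
    """
    z = np.random.default_rng(seed).standard_normal(n)
    s_t = s0 * np.exp(+0.5 * sigma**2 * t + sigma * math.sqrt(t) * z)
    return float(np.mean(np.maximum(s_t - k, 0.0) / s_t))


# Hypothetical inputs, for illustration only.
s0, k, sigma, t = 60_000.0, 65_000.0, 0.8, 1.0 / 12.0
exact_in_coins = bs_call_usd(s0, k, sigma, t) / s0
print(f"closed form / S0 : {exact_in_coins:.4f}")
print(f"vanilla MC / S0  : {mc_vanilla_usd(s0, k, sigma, t) / s0:.4f}")
print(f"inverse MC (coin): {mc_inverse_coin(s0, k, sigma, t):.4f}")
```

In this toy model, both measure changes are valid because S and 1/S are true martingales under Q and Q^I respectively. The post's point is that this must also hold for a stochastic volatility model with positive return-volatility correlation.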

In the figure below, I show the model fit to Bitcoin options observed on 21-Oct-2021 (a period with positive skew) for the most liquid maturities of 2 weeks, 1 month, 2 months, and 3 months. The model calibrated to Bitcoin options data captures the market implied skew very well across these maturities with only 5 model parameters. The average mean squared error (MSE) in implied volatilities is about 1%, which is mostly within the quoted bid-ask spread. Calibration in the ATM region can be improved further by using a term structure of the mean volatility, or by augmenting the SV model with a local volatility component to fit the implied volatility surface accurately.
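Fit quality statements of this kind can be checked per maturity slice with a few lines of code. This is a minimal sketch, assuming quotes arrive as bid and ask implied volatilities per strike; the helper `fit_diagnostics` and all numbers are hypothetical, not the Bitcoin data from the figure.

The sketch reports three quantities. The first two are the squared error against mid volatilities (MSE) and its square root (RMSE), since the two are quoted in different units. The third is the share of strikes where the model volatility lies inside the bid-ask spread.

```python
import numpy as np


def fit_diagnostics(model_vols, bid_vols, ask_vols):
    """Compare model implied vols with quoted bid/ask implied vols for one slice."""
    model = np.asarray(model_vols, dtype=float)
    bid = np.asarray(bid_vols, dtype=float)
    ask = np.asarray(ask_vols, dtype=float)
    mid = 0.5 * (bid + ask)
    errors = model - mid
    mse = float(np.mean(errors**2))  # in squared volatility units
    rmse = float(np.sqrt(mse))  # in volatility units
    within = float(np.mean((model >= bid) & (model <= ask)))
    return {"mse": mse, "rmse": rmse, "share_within_spread": within}


# Hypothetical quotes for a single maturity slice (volatilities as decimals).
bid_vols = [0.78, 0.80, 0.83, 0.87, 0.92]
ask_vols = [0.82, 0.84, 0.87, 0.91, 0.96]
model_vols = [0.80, 0.83, 0.86, 0.88, 0.97]

diagnostics = fit_diagnostics(model_vols, bid_vols, ask_vols)
print(diagnostics)
```

Averaging these diagnostics across maturity slices then gives a single summary number for the whole surface.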

Model applications

The quality of model fit is similar for other assets with either positive or negative skews. The main strength of our model is that it can be used for the following purposes.

- Cross-sectional no-arbitrage model for different exchanges and options referencing the same underlying.

- Model for time series analysis of implied volatility surfaces.

- Dynamic valuation model for structured products and option books.

Further resources

SSRN paper "Log-normal Stochastic Volatility Model with Quadratic Drift": https://ssrn.com/abstract=2522425

GitHub project with an example implementation of the model in Python: https://github.com/ArturSepp/StochVolModels

YouTube video of a lecture I gave at Imperial College on model applications to Bitcoin volatility surfaces: https://youtu.be/dv1w_H7NWfQ

YouTube podcast introducing the paper and reviewing the GitHub project with Python analytics for the model implementation: https://youtu.be/YHgw0zyzT14

Disclaimer

The views and opinions presented in this article and post are mine alone. This research is not investment advice.